Ok, we're almost 2 months into the new year, so we're not exactly starting it anymore. By now, you're already feeling guilty about not using that gym membership you bought on January 2nd, and you've slipped more than once on your resolution to make the bed every day. (Every. Day.) So here's a chance to make a new New Year's resolution: stepping up your QA processes. This guide will help you better understand your team and make better decisions based on the domain you’re working in, then analyse your progress and make further changes based on what you've learned.

Maybe you’re reading this and thinking "Our QA game is just fine, thanks." And maybe it is. Maybe. But let me ask you this: Have you recently gone through a bad release? Are you just "hoping" it will go better next time? Most of the time you move from release to release without reflecting on how your QA process is doing, or even knowing how to measure it – which is a pretty good definition of "insanity". So enough hoping. Let’s have more doing.

1 – Analyse your team

The first step is to analyse the team. I'm not just talking about the people on your team, but also the type of work you're doing and the whole domain you're working in. Is the work involved very front-end or back-end heavy? Do you rely on external APIs? Will you be integrating with other systems? Do you have a code dependency on other teams?

Let’s assume your project involves integrating two existing systems within your organisation, working with two or more teams. The work will be mainly front-end oriented but there's some initial back-end work to get done.

All teams involved currently ship on different cadences and follow similar agile methodologies. Experience within the team is mixed, ranging from junior to senior developers.

So here's what we can take away about this new project:

- Team members might need to learn new codebases or systems
- Front-end-heavy work will mean cross-browser testing
- Multiple teams with different dev processes are a warning sign: checking that the right code versions of multiple systems work together will require careful co-ordination of dependencies across the platforms
- Communication could be a barrier between the teams

2 – Implement

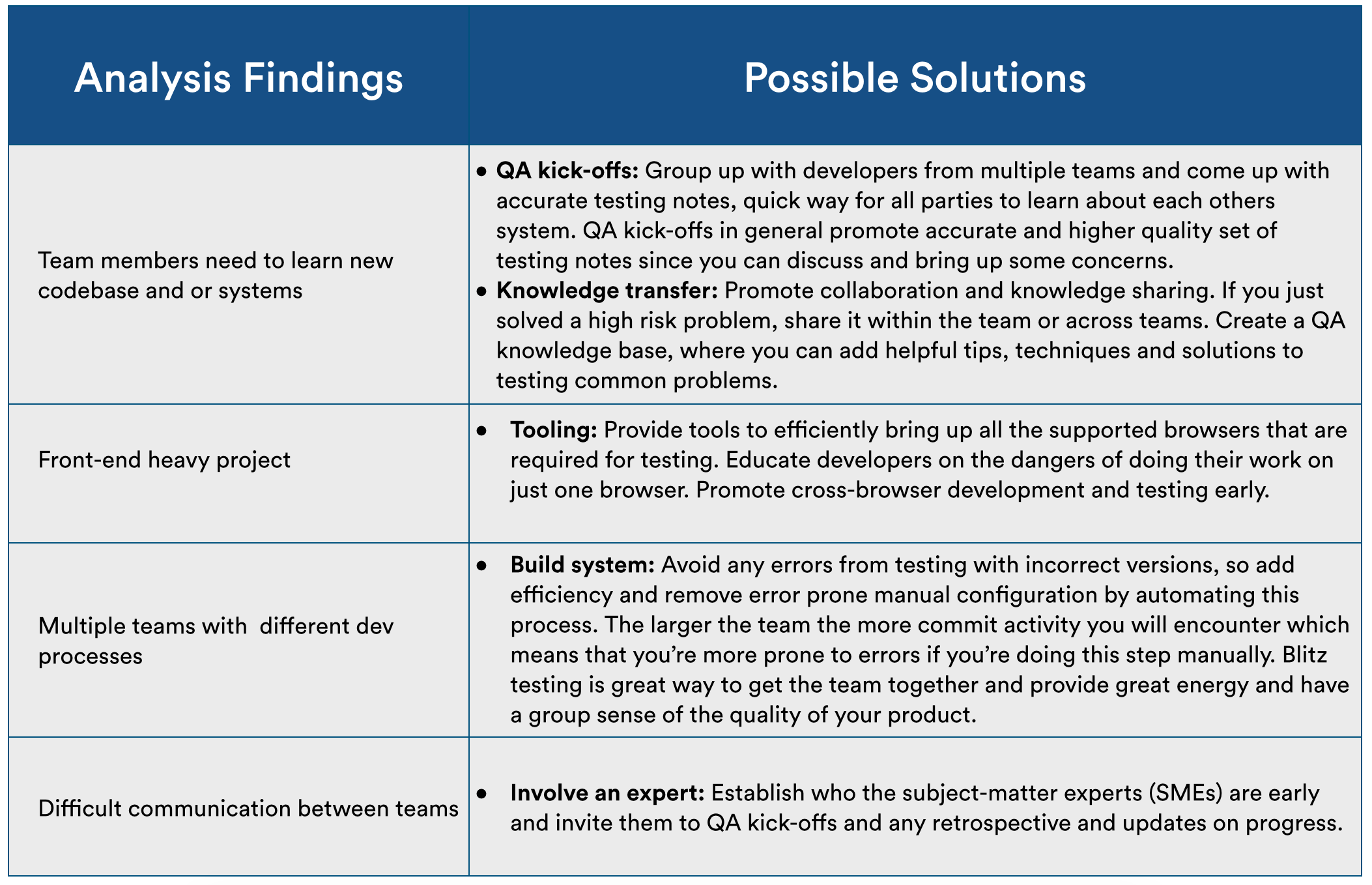

Based on the analysis, you now have a better understanding of how your team works and what could be some of the high-risk areas that you need to focus on.

This is an example of some of the process improvements you can implement. If you're interested, check out a more in-depth explanation of the various QA processes we use at Atlassian.

3 – Automate

Automation is a vital part of achieving fast development and testing. A great deal of developer and tester time can be wasted on repeatedly setting up a test environment.

Automation not only makes your development team more efficient but can also reduce the mistakes that come from doing too many things manually.

Test environments

Set up infrastructure that lets your team members quickly bring up a test environment with an architecture similar to what end users will be running.

Data populators or importers

Your test environment needs to have a wide range of data, for example i18n characters, XSS attack strings, large string lengths, images, or documents – whatever content your product is designed for. You don’t want to be entering this data every time you test.
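As a rough illustration, a data populator can start out as a small script that generates awkward inputs on demand. The sketch below is only a starting point under assumptions: the sample strings, the record shape (`kind` and `title`), and the function names are hypothetical, and a real populator would push these records into your product through its own import mechanism.

```python
import random
import string

# Hypothetical catalogue of awkward inputs; extend it for your own product.
I18N_SAMPLES = ["Zürich", "東京", "Ελληνικά", "עברית"]
XSS_SAMPLES = [
    "<script>alert(1)</script>",
    "\"><img src=x onerror=alert(1)>",
]


def long_string(length, seed=0):
    """Return a reproducible string of ASCII letters of the given length."""
    rng = random.Random(seed)  # seeded so every run produces the same data
    return "".join(rng.choice(string.ascii_letters) for _ in range(length))


def build_test_records(long_length=10_000):
    """Build one record per awkward input, tagged with the case it covers."""
    records = []
    for text in I18N_SAMPLES:
        records.append({"kind": "i18n", "title": text})
    for text in XSS_SAMPLES:
        records.append({"kind": "xss", "title": text})
    records.append({"kind": "long", "title": long_string(long_length)})
    return records
```

Calling `build_test_records()` returns a list you can loop over and feed into whatever import step your test environment offers. Seeding the random generator keeps the data identical between runs, which makes failures easier to reproduce.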

Smoke tests

What are these? Smoke tests are a subset of tests that cover the vital functionality of your application: if one fails, your team is blocked from shipping.

The proper way to get an effective suite of smoke tests is to run them against an environment that gets upgraded in place, not one that is wiped clean every time and then loaded with the latest code. After all, the end user is not going to blow away all their data before upgrading their system, right? So you need to replicate the upgrade process and then run automated tests that cover the core functionality of your product.

Having a good suite of smoke tests reduces the amount of manual testing required and allows your team members to test the specific features that have been added. You should also add these tests as part of your release process as a kind of exit criteria.
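To make the idea of smoke tests as exit criteria concrete, here is a minimal sketch of a smoke-test runner. Everything in it is an assumption for illustration: `UPGRADED_ENV` is a hypothetical in-memory stand-in for an environment that was upgraded in place, and the two checks are placeholders for whatever core functionality matters in your product.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for an environment upgraded in place:
# data created before the upgrade is still there next to the new version.
UPGRADED_ENV = {
    "version": "2.0",
    "users": ["alice", "bob"],
    "pages": {"home": "Welcome back"},
}


def existing_users_survived(env: dict) -> bool:
    """Data created before the upgrade must still be present."""
    return len(env["users"]) > 0


def home_page_renders(env: dict) -> bool:
    """The most basic page must still have content after the upgrade."""
    return bool(env["pages"].get("home"))


SMOKE_CHECKS: Dict[str, Callable[[dict], bool]] = {
    "existing users survived upgrade": existing_users_survived,
    "home page renders": home_page_renders,
}


def run_smoke_tests(env: dict, checks: Dict[str, Callable[[dict], bool]]) -> Tuple[bool, List[str]]:
    """Run every check and return (ok_to_ship, names_of_failed_checks)."""
    failures = []
    for name, check in checks.items():
        try:
            passed = check(env)
        except Exception:
            passed = False  # a crashing check counts as a failure
        if not passed:
            failures.append(name)
    return (not failures, failures)
```

A release script could call `run_smoke_tests(UPGRADED_ENV, SMOKE_CHECKS)` and refuse to continue unless the first value is `True`. In practice the checks would talk to a real upgraded instance, for example over HTTP or through a browser driver, rather than read a dictionary.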

Dashboards

Use a dashboard to display information that helps your team in their daily work. How many bugs have been raised during the sprint or iteration? What is the status of the builds? Are there any actions or warning signs? A properly configured dashboard is a very effective tool, and most are very easy to connect to your development tools.

There are several very good free dashboards out there. Atlasboard is one of these, created in-house here at Atlassian. Another dashboard option is Dashing.

Learn a scripting language

All of the above tools can be built with a scripting language. I recommend learning Ruby or Python since both are great, easy-to-learn languages with large libraries that do almost anything.

Getting developer time to help you on these tools is usually difficult, so take the first step and learn to code.

4 – Measure, measure, measure

How do you know whether the steps you've implemented are providing any benefit?

Some of the metrics you'll want to check on a frequent basis (usually aligned with your sprint or iteration) include:

- Speed of development (number of stories completed)
- Number of bugs
- Severity and types of bugs found
- Where in the development process are these bugs caught?

Make sure you have your metrics well-defined (otherwise your numbers will be meaningless).
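One way to keep the definitions honest is to compute the numbers with a script, so the same rules apply every sprint. The sketch below assumes a hypothetical `Bug` record with a severity and the stage where the bug was found. The "escape rate" (the share of bugs first found in production) is an extra, assumed metric included to show how "where bugs are caught" can become a single number.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Bug:
    severity: str  # hypothetical labels, e.g. "blocker", "major", "minor"
    found_in: str  # hypothetical stages, e.g. "code review", "QA", "production"


def sprint_metrics(stories_completed: int, bugs: Iterable[Bug]) -> dict:
    """Summarise one sprint using the metrics listed above."""
    bugs = list(bugs)
    by_stage = Counter(bug.found_in for bug in bugs)
    by_severity = Counter(bug.severity for bug in bugs)
    total = len(bugs)
    return {
        "stories_completed": stories_completed,
        "bug_count": total,
        "by_severity": dict(by_severity),
        "by_stage": dict(by_stage),
        # Share of bugs that reached production; 0.0 when there were no bugs.
        "escape_rate": by_stage["production"] / total if total else 0.0,
    }
```

For example, a sprint with 8 completed stories and 4 bugs, 1 of which was found in production, gives a `bug_count` of 4 and an `escape_rate` of 0.25. Comparing these dictionaries sprint over sprint shows whether the process changes are moving the numbers.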

5 – Fine-tune the QA engine

It’s not enough to do the above steps once and assume it’s all smooth sailing from there. Sometimes it takes a couple of rounds of tweaking before you get it right.

By measuring your team, you now have a better picture of how it is progressing. I would also recommend holding retrospectives within your team: get everyone to raise good and bad points, debate them, and come up with some actions.

Finally, remember to think of the QA process as a living organism within your team: you need to keep a constant eye on it to make sure it’s healthy.