At Wittified, all of our products rely on one of a couple of add-on development frameworks provided by Atlassian.

Many of them were built using Atlassian’s long-standing Plugins 2 (P2) framework. These are server-based add-ons, because they run locally on the customer’s server. As an add-on vendor, that’s one of the initial benefits of P2 add-ons: wherever the customer elects to host their Atlassian tools, that’s where all their P2 add-ons are hosted as well. Seems like an obvious benefit. An easy choice for the developer, right? Well, not exactly.

Since P2 add-ons run on the customer’s server, add-on developers usually have very little access to real time insights. This can make it challenging to quickly address small issues before they become larger problems. It can also mean a whole lot of environment-specific troubleshooting when there are problems.

In other words, by avoiding an investment in hosting you may pay a whole lot more in terms of support.

Enter Atlassian’s latest development framework: Atlassian Connect.

Atlassian Connect resolves many of the challenges around real time insight by giving developers the ability to host their own add-on functionality. Rather than rely on each customer’s server, the add-on’s core logic is hosted centrally by the developer, and the communication with Atlassian’s tools (JIRA, Confluence, etc.) is handled through HTTP calls. It’s a fundamentally different way of building add-ons, and if it doesn’t sound exciting, it should. That’s because it can provide a whole lot more control over your code and it can make supporting your add-ons a heck of a lot more efficient.

These big benefits require some investment. But is the investment really that big?

Some developers may overlook Connect because of this hosting requirement. They may think “Can I really host my own add-on without breaking the bank?” or “Can I sell a cost effective add-on for Atlassian Cloud and still make a profit?”

By the end of this post not only do I plan to convince you that you can, but that you should!

PaaS vs IaaS

At first glance, Heroku, OpenShift, or other PaaS (Platform as a Service) solutions may seem like a no-brainer to quickly get started. A common benefit of PaaS services is that they can manage the full platform for you. They also offer a compelling guarantee: that they’ll keep the service up and running for you. But like most things, there’s another important (and often costly) trade-off.

That guarantee can quickly turn into an expensive proposition once your add-on goes into production and you need to start scaling. You grow some customers, the platform grows with you. Great. But remember, the cost of that guarantee grows very quickly as well. In my opinion, often much faster than necessary!

Looking closer, there can be other unforeseen costs as well. One of the ways PaaS services are able to get you up and running so fast is by requiring that you adopt their standards and conventions. This means that moving from one service to another can cause some serious conversion headaches beyond the additional cost of the next service.

On the other side of the spectrum are IaaS (Infrastructure as a Service) solutions like Amazon, DigitalOcean, Rackspace, Linode, and others. If you’re willing to apply a little bit of effort to stand up your own server and maintain it, this is where you can save a bunch of money!

So for the purpose of this article, that’s exactly what we’re going to do.

Before we continue though, a quick disclaimer. This write up describes just one way of doing things. It’s definitely not meant as an “end all, be all”, or a “one size fits all” by any means. As with any endeavor, always do your own research before implementing anything new (especially when it comes to security).

Choosing a service provider

For the purpose of this post, we’ve selected DigitalOcean as our service provider. (However, we could have just as easily used Linode, Amazon, or Rackspace. The instructions below would be pretty much the same.)

We’re going to set up a very basic Atlassian Connect application using a Node.js application and the Atlassian Connect Express framework.

If you’d like to follow along, first you’ll need to create a DigitalOcean account. Once you’ve created your account, you can upload an SSH key to streamline further password requirements. (We’re already using SSH keys when using Bitbucket, right?)

Creating the application

Next we’ll want to create a basic artifact to upload. For the purpose of this post, you can grab a very basic Atlassian Connect Express (ACE) application over here. (It’s literally just a basic ACE application without any modifications.)

In order to prepare it for installation somewhere, we have a basic deploy.sh in the root:

#!/bin/sh

npm install

rm -f app-$1-*.tar.gz

tar zcvf app-$1-$2.tar.gz *

When the deploy.sh is triggered with both an application name and a numerical ID (such as a build number) the output is a versioned tar.gz file, which can be sent off to a server (or two) and then unpackaged there.
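To make the naming convention concrete, here is a minimal sketch of the same packaging step written as a shell function. It is not the post’s deploy.sh: the function name `package_app` and its four arguments are assumptions made for illustration, it skips the `npm install` step, and it writes the archive to a separate output directory so the tarball never ends up inside itself.

```shell
#!/bin/sh
# Hypothetical helper (not part of the original deploy.sh).
# Usage: package_app <source-dir> <app-name> <build-id> <output-dir>
# Prints the path of the archive it created.
package_app() {
    src=$1
    name=$2
    build=$3
    out=$4
    # Same naming scheme as deploy.sh: app-<name>-<build>.tar.gz
    artifact="$out/app-$name-$build.tar.gz"
    mkdir -p "$out" || return 1
    # -C changes into the source directory first, so paths in the
    # archive are relative to the application root.
    tar -czf "$artifact" -C "$src" . || return 1
    printf '%s\n' "$artifact"
}
```

For example, `package_app . my-app 1 /tmp/builds` would produce /tmp/builds/app-my-app-1.tar.gz, which could then be copied to a server with something like `scp /tmp/builds/app-my-app-1.tar.gz root@your-droplet-ip:/opt/apps/deploys/` (the address is a placeholder).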

Creating a server

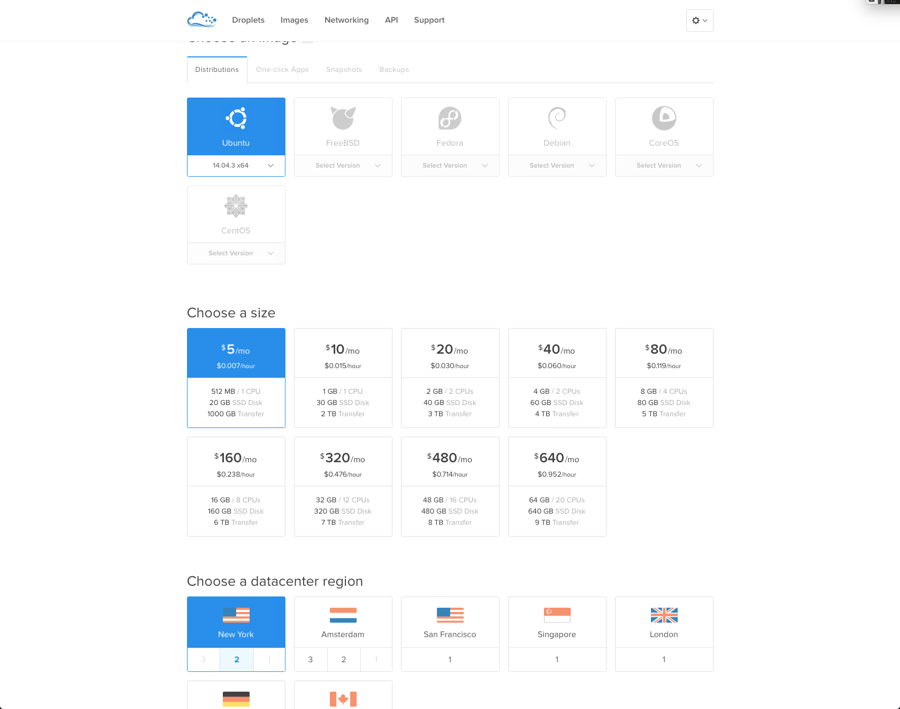

We’ll now need to create a basic server called a “droplet” (in DigitalOcean’s vocabulary). Click on the Create Droplet link at the top of the DigitalOcean control screen and you should see the following options:

We’ll need to give the droplet a hostname. Since we’re focused on the Node.js application in this case, we’ll add a suffix of -app1. Select the $5 host option (1 CPU and 512MB memory) and pick any of the New York locations. For context, the Atlassian Cloud host applications currently run in the US Northeast (as of the writing of this article at least), so that would theoretically be the closest spot.

Next, select the distribution you would like. For this post, we’ll select Ubuntu 14.04. (Note that the rest of this post is based on our selection of Ubuntu 14.04, so if you pick anything else you’ll just need to convert the commands and examples below.)

Make sure to enable Private Networking. That way traffic between your servers won’t have to leave the datacenter unless it absolutely needs to. Note that we’re not going to create the database just yet, so we won’t enable backups at this stage.

Finally, click Create Droplet and wait. (Depending on your variables, creating a new droplet can take 30 seconds or more.)

Setting up the application server

Let’s go ahead and SSH into the server to start setting it up.

One of the easiest ways to install Node.js on Ubuntu is by using the apt-get command. However, it’s important to note that you may get an older version. For this example, we’re going to do it that way, though I would recommend reading this article if you would like more control. In addition, we’re going to install the excellent PM2 Node.js library which will keep things running for us.

apt-get install nodejs -y

apt-get install npm -y

apt-get install nodejs-legacy -y

npm -g install pm2

Configuring the application server

Ok, now we can start configuring things. First, we’ll stop running as root. (Never, ever, ever run an application as root!)

Let’s create a nodejs user that will run our application for us.

useradd -m nodejs

mkdir -p /opt/apps/active

mkdir -p /opt/apps/releases

mkdir -p /opt/apps/deploys

mkdir -p /opt/apps/bin

chown -R nodejs /opt/apps

This directory structure allows us to keep our released versions in /opt/apps/releases and establish an active symlink with /opt/apps/active. We’ll place all of our utility scripts in /opt/apps/bin – the first of which is activate.sh.

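#!/bin/sh

# Usage: activate.sh <app-name> <build-id>

mkdir -p "/opt/apps/releases/$1-$2"

cd "/opt/apps/releases/$1-$2" || exit 1

# deploy.sh names its output app-<app-name>-<build-id>.tar.gz

tar zxvf "/opt/apps/deploys/app-$1-$2.tar.gz"

rm -f "/opt/apps/active/$1"

ln -s "/opt/apps/releases/$1-$2" "/opt/apps/active/$1"

pm2 restart "$1"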

Next, we’ll need to make this executable:

chmod +x /opt/apps/bin/activate.sh
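One thing this layout makes easy is switching back to an earlier release, since every unpacked build stays in /opt/apps/releases. The following is a rough sketch of that idea, not something from the original setup: the function name `point_active_at` and the `APPS_ROOT` variable are hypothetical, and it assumes an `ln` that supports `-n` (GNU and BSD both do).

```shell
#!/bin/sh
# Hypothetical rollback helper built on the directory layout above.
# APPS_ROOT is an assumed override hook; it defaults to /opt/apps.
APPS_ROOT=${APPS_ROOT:-/opt/apps}

# Usage: point_active_at <app-name> <build-id>
point_active_at() {
    app=$1
    build=$2
    target="$APPS_ROOT/releases/$app-$build"
    # Refuse to point the symlink at a release that was never unpacked.
    if [ ! -d "$target" ]; then
        echo "no such release: $target" >&2
        return 1
    fi
    # -s: symbolic link, -f: replace an existing link,
    # -n: treat an existing link to a directory as a file, not a directory.
    ln -sfn "$target" "$APPS_ROOT/active/$app"
}
```

After something like `point_active_at my-app 1`, a `pm2 restart my-app` would still be needed for the running process to pick up the older code.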

Now let’s start up the app!

Go ahead and upload the tarball from earlier into /opt/apps/deploys. Switch to the nodejs user with:

sudo su - nodejs

Then execute the following:

/opt/apps/bin/activate.sh my-app 1

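You should see things being untarred and then end with: `[PM2][ERROR] Process my-app not found`. That error is expected on the first run, because PM2 doesn’t know about a my-app process until we start it for the first time.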

Let’s now configure the environment variables that the application will need in order to run. In this case we’ll set the environment to be DEVELOPMENT. (If there are any other similar environment variables you’d like to set, this would be a good time to do so as well.) The public-key and private-key are your RSA keys generated specifically for this add-on by utilities such as JSEncrypt.

export environment=DEVELOPMENT

export AC_PUBLIC_KEY='your-public-key'

export AC_PRIVATE_KEY='your-private-key'
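Because a missing variable tends to surface only as a confusing failure later on, it can help to check for them before starting the application. This is a small sketch under stated assumptions: `require_env` is a hypothetical helper name, and the variable names are simply the ones exported above.

```shell
#!/bin/sh
# Hypothetical pre-flight check: fail if any named variable is unset or empty.
# Usage: require_env VAR_NAME [VAR_NAME...]
require_env() {
    missing=0
    for name in "$@"; do
        # Indirect lookup of the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing environment variable: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}
```

A typical call would be `require_env environment AC_PUBLIC_KEY AC_PRIVATE_KEY || exit 1` in whatever script launches the app.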

Then start up the application with:

cd /opt/apps/active/my-app

pm2 start --name my-app app.js

You should be presented with something like this:

┌───────────────┬────┬──────┬──────┬────────┬─────────┬────────┬────────────┬──────────┐

│ App name │ id │ mode │ pid │ status │ restart │ uptime │ memory │ watching │

├───────────────┼────┼──────┼──────┼────────┼─────────┼────────┼────────────┼──────────┤

│ my-app │ 0 │ fork │ 4279 │ online │ 0 │ 0s │ 4.746 MB │ disabled │

└───────────────┴────┴──────┴──────┴────────┴─────────┴────────┴────────────┴──────────┘

Use `pm2 show <id|name>` to get more details about an app

You should also be able to access the application on port 3000.

Before we finish with the application server though, there’s one more thing to do there. We need to make sure it always starts! Go ahead and execute the following as root:

sudo pm2 startup ubuntu

Now whenever the server reboots, PM2 will start back up. PM2 will then restart your application whenever it dies. Good stuff!

We’ll need a web server

We still need something very important. We’re creating an Atlassian Connect add-on and that requires HTTPS. In order to do that we need an SSL proxy. My personal preference is NGINX.

Let’s head back over to DigitalOcean’s [droplet creation UI](https://cloud.digitalocean.com/) and create another basic droplet for $5 a month (512MB, 1 CPU). Once that’s been created we’ll install the web server.

Edit the /etc/nginx/sites-enabled/default and change it to the following, replacing ip-from-app-server with the ip address of the application server we created earlier:

upstream app { server ip-from-app-server:3000; }

server

{

listen 443 ssl;

listen 80;

server_name app.my-app.com;

location / { proxy_pass http://app; }

}

We’re now running on port 80, but we still need to add in SSL. Luckily there’s an open effort that’s growing in popularity called Let’s Encrypt that provides free certificates. This will be perfect for our example, though later on you may still want to get a traditional certificate. This page should provide you with the necessary instructions to follow. Once that’s in place, just restart NGINX and we’re almost done.
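For a sense of what the finished configuration might look like once a certificate is in place, here is a hedged sketch that prints a server block for a given hostname and list of application servers. The function name `render_site` is hypothetical, the IP addresses in the usage note are placeholders, and the certificate paths assume the default /etc/letsencrypt/live/ layout used by the Let’s Encrypt client; adjust them if your certificate lives elsewhere.

```shell
#!/bin/sh
# Hypothetical generator for the NGINX site config described above.
# Usage: render_site <server-name> <app-server-ip> [<app-server-ip>...]
render_site() {
    server_name=$1
    shift
    echo "upstream app {"
    # One upstream entry per application server, all on port 3000.
    for ip in "$@"; do
        echo "    server $ip:3000;"
    done
    echo "}"
    # Certificate paths are an assumption (default Let's Encrypt layout).
    cat <<EOF
server {
    listen 443 ssl;
    listen 80;
    server_name $server_name;
    ssl_certificate /etc/letsencrypt/live/$server_name/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/$server_name/privkey.pem;
    location / { proxy_pass http://app; }
}
EOF
}
```

Running `render_site app.my-app.com 10.0.0.2 > /etc/nginx/sites-enabled/default` followed by `nginx -t` (to check the syntax) and `service nginx restart` would apply it. Passing more than one IP address produces a load-balanced upstream.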

Now we just need to button up our DNS.

Buttoning up our DNS

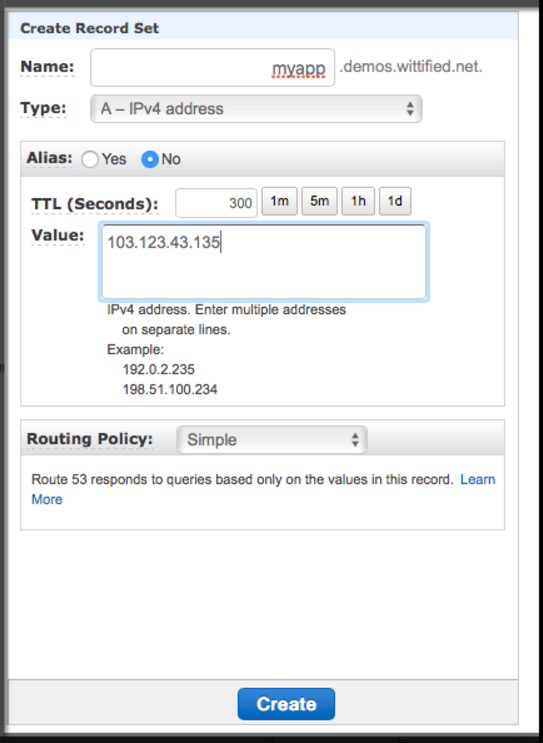

Luckily Amazon Route 53 is really cost effective and easy to use. (There are some other great options out there though, so feel free to shop around.)

Depending on your Time To Live (TTL) requirements and how many users you have, for a basic application you’re probably looking at less than $5 a month (though your final cost may vary a bit). Not bad!

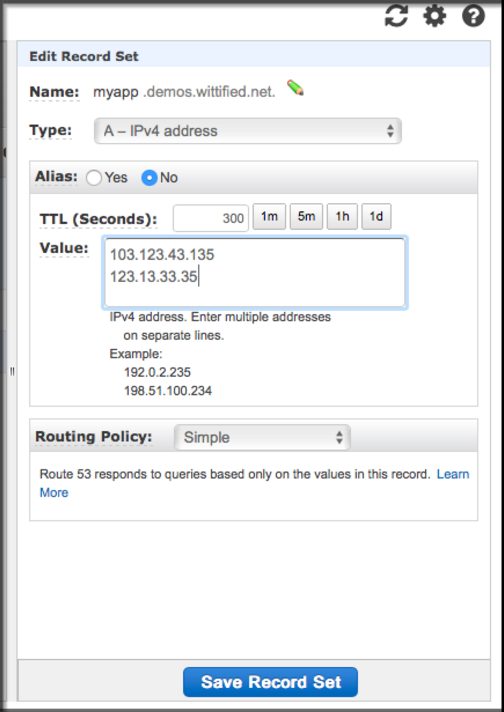

For this example, let’s head on over to Amazon Route 53 and create an A record for the IP address of the webserver with your desired DNS entry.

Time for the database

We’re now almost done with the application set up. We just need to store the data somewhere.

Time to head back to our trusty DigitalOcean account to create another $5 droplet. This time let’s set up Postgres on it.

apt-get update

apt-get install postgresql-9.3

Next, let’s create the database we want and give it the necessary permissions so that our Node.js application can use it.

root@blog-db1:~# sudo su - postgres

postgres@blog-db1:~$ psql

postgres=# CREATE ROLE myaddonuser WITH LOGIN PASSWORD 'myaddonp@ssW0rd'

VALID UNTIL 'infinity';

postgres=# CREATE DATABASE myaddondb with ENCODING='UTF8' OWNER=myaddonuser;

You’ll also need to add your application server’s IP address to /etc/postgresql/9.3/main/pg_hba.conf so that the application has a chance to authenticate. Then, head back to the application server and update your Node.js application to use the new credentials.
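As a rough illustration of what those entries look like, the sketch below prints a pg_hba.conf line for one client and the matching `listen_addresses` setting for postgresql.conf (out of the box PostgreSQL only listens on localhost, so remote connections need both). The function names are hypothetical, the IP addresses in the usage note are placeholders, and `md5` password authentication is an assumption that matches the password-based role created above.

```shell
#!/bin/sh
# Hypothetical helpers that print the config lines; they do not edit any files.

# Usage: pg_hba_entry <database> <role> <client-ip>
# Allows one client IP to connect to one database as one role, by password.
pg_hba_entry() {
    printf 'host %s %s %s/32 md5\n' "$1" "$2" "$3"
}

# Usage: listen_addresses_setting <database-server-private-ip>
# Makes PostgreSQL listen on its private interface as well as localhost.
listen_addresses_setting() {
    printf "listen_addresses = 'localhost,%s'\n" "$1"
}
```

For instance, `pg_hba_entry myaddondb myaddonuser 10.0.0.2 >> /etc/postgresql/9.3/main/pg_hba.conf` would append the access rule, and the output of `listen_addresses_setting 10.0.0.5` belongs in /etc/postgresql/9.3/main/postgresql.conf. A `service postgresql restart` is needed for the listen address change to take effect.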

Safety in numbers

Ok, so far we’re spending about $20 per month. Wait? That’s all?

Almost. We’re on our feet, but we don’t have any real safety nets in place just yet. Thankfully, that’s a really easy one to resolve!

Creating images

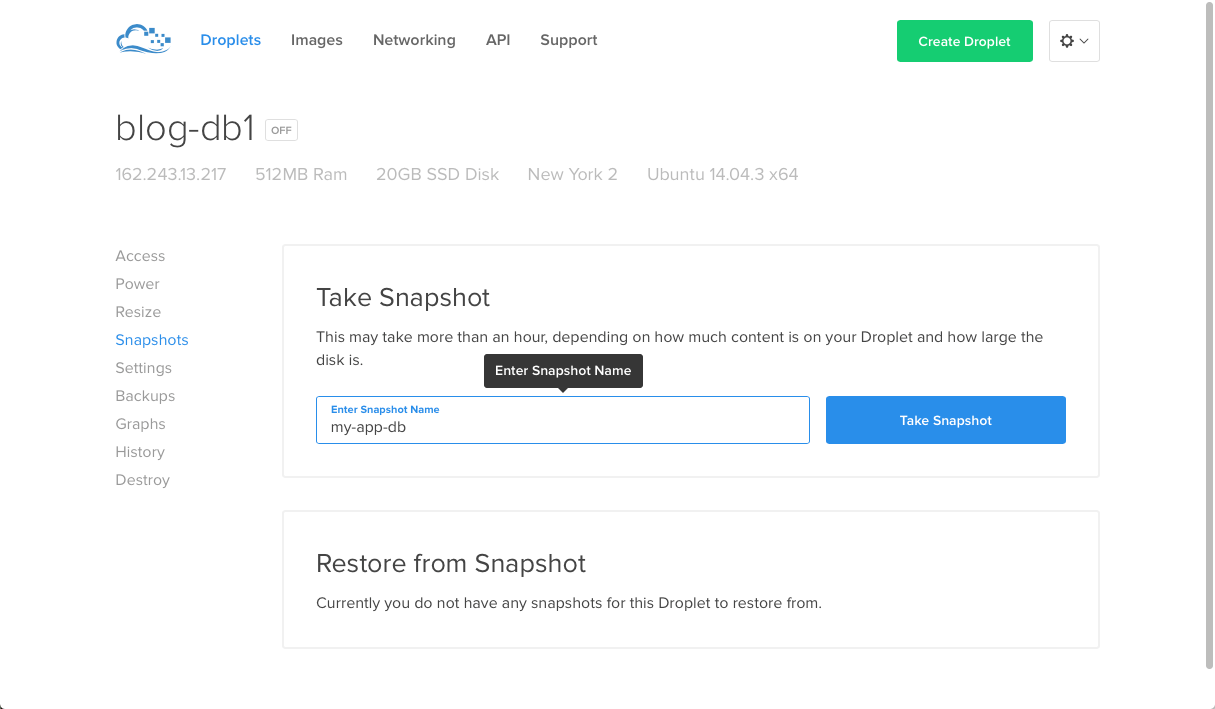

First, we’ll need to shut down each of the servers using the regular Linux command: sudo shutdown -h now

Then, we’ll head into DigitalOcean’s droplet interface. Within each of the droplets (database, web and application server) select the option to take a “snapshot” of each of them. This will provide you with an image of each. Once that’s done the servers will come back online again automatically.

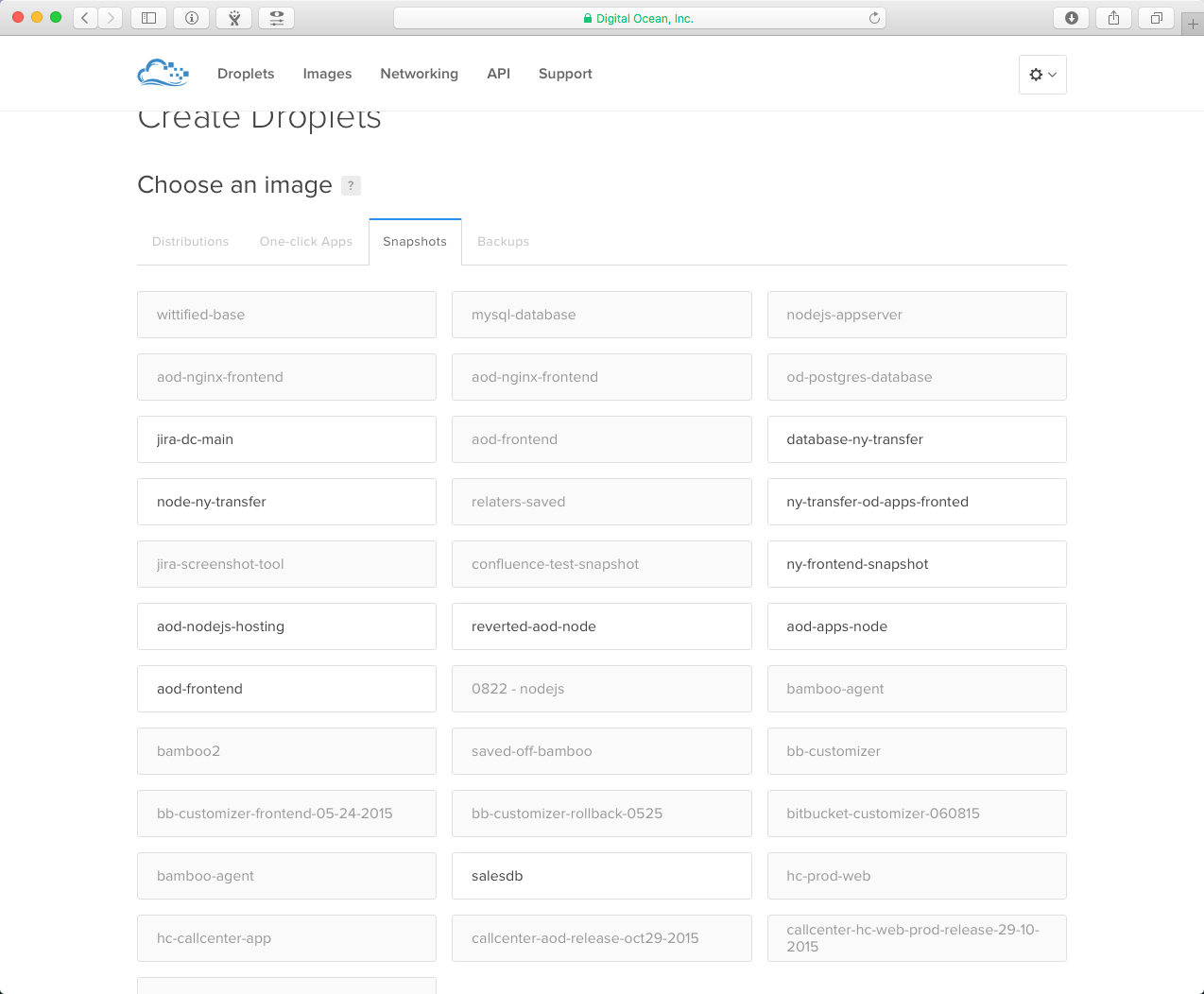

Next, let’s double down on our capacity. Within DigitalOcean, go ahead and create a few more droplets for app2, webserver2, and db2 – but for each of these we’re just going to use the snapshots that we just created, instead of just plain Ubuntu.

Once those have been created, we’ll need to do the following:

Adding the application server

Go ahead and SSH into the new web server and edit the /etc/nginx/sites-enabled/default file. We’re going to add the IP address of the new application server right underneath where it says: *server ip-from-app-server:3000;*. The result should look like the following, making sure ip-from-app-server and ip-from-app-server2 are replaced with the appropriate addresses:

upstream app

{

server ip-from-app-server:3000;

server ip-from-app-server2:3000;

}

server

{

listen 443 ssl;

listen 80;

server_name app.my-app.com;

location / { proxy_pass http://app; }

}

Then, restart the web server service on this new server by executing `service nginx restart`, and repeat this for the original web server that we previously created. This is a good point to take a new snapshot of the server as well.

Adding the new web server

Next, head over to your DNS provider and just add the new IP to the same record.

Mirroring the database

As a final safety net, we’ll set up the database in an active/standby configuration. This article from DigitalOcean provides a good tutorial.

Keeping the bad guys at bay

Next we’ll make sure that we can restrict access. For this we’ll use UFW so that we can set up a very basic firewall on each server. Note that you may want to look into additional security provisions to fit your individual needs before heading into production.

apt-get update -y

apt-get install ufw -y

To configure UFW, we’ll just need to SSH into each of our three different machine types and enable the next layer to access it, in addition to the SSH port (so we can access it):

Database

ufw default deny incoming

ufw default allow outgoing

ufw allow ssh

ufw allow from ip-from-app-1 to any port 5432

ufw allow from ip-from-app-2 to any port 5432

ufw enable

Application server

ufw default deny incoming

ufw default allow outgoing

ufw allow ssh

ufw allow from ip-from-webserver-1 to any port 3000

ufw allow from ip-from-webserver-2 to any port 3000

ufw enable

Web Server

ufw default deny incoming

ufw default allow outgoing

ufw allow ssh

ufw allow https

ufw enable

Now we’re good to go! We’ve defined specific IPs that can access specific ports and things are becoming more secure. For a deeper dive into UFW, check out this article. To keep going from here, a great next step would be to look into VPN solutions in order to secure your SSH access.
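Since the three rule sets differ only in the port and the list of allowed addresses, they can be generated rather than typed by hand. Here is a minimal sketch, assuming a hypothetical `ufw_commands` helper; it only prints the commands so they can be reviewed before anything is applied.

```shell
#!/bin/sh
# Hypothetical dry-run generator for the per-tier firewall rules above.
# Usage: ufw_commands <port> <allowed-ip> [<allowed-ip>...]
ufw_commands() {
    port=$1
    shift
    echo "ufw default deny incoming"
    echo "ufw default allow outgoing"
    # Always keep SSH open so we do not lock ourselves out.
    echo "ufw allow ssh"
    # One rule per upstream machine that is allowed to reach this port.
    for ip in "$@"; do
        echo "ufw allow from $ip to any port $port"
    done
    echo "ufw enable"
}
```

On the database server, for example, `ufw_commands 5432 ip-from-app-1 ip-from-app-2` prints the same six commands listed in the Database section; piping the output to `sh` as root would run them.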

Worth the investment

If for some reason one of your web servers dies, the second IP address on the Amazon Route 53 record gives clients another web server to reach (adding Route 53 health checks lets it stop handing out the dead address automatically). If one of the application servers dies, the NGINX layer will redirect the necessary traffic as well. And if the application itself dies on a server, then PM2 will take care of restarting it. Pretty awesome stuff!! 🙂

What’s even better is that if you get a huge amount of load, you can easily add capacity. And do so at an extremely reasonable price. It’s by far the best of both worlds in my opinion!

Now you have complete control over your application and environment variables, access to real time information, and you can track down user issues a heck of a lot faster within a centralized location. All for under $40 a month!

Obviously there are more considerations to be mindful of around security, monitoring, and automated deployments. We’ll be happy to touch on those areas as well within a future post. In the meantime, we invite you to go check out Wittified’s add-ons portfolio on the Atlassian Marketplace which includes both Server as well as Connect-based offerings. As we mentioned above, every situation is different. Like anything else, there’s no single answer that covers every scenario when picking your path for developing a new Atlassian add-on.

Hopefully our experience and perspective with Connect simply highlights it as a compelling opportunity for developers. One that’s worth a little up front effort in exchange for a whole bunch of long term benefit!